- 09 Jul, 2018 3 commits

-

-

Ori Livneh authored

High system load can skew benchmark results. By including system load averages in the library's output, we help users identify a potential issue in the quality of their measurements, and thus assist them in producing better (more reproducible) results. I got the idea for this from Brendan Gregg's checklist for benchmark accuracy (http://www.brendangregg.com/blog/2018-06-30/benchmarking-checklist.html).
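As a rough illustration of the idea, and not the library's actual implementation, a harness could read the system load averages and warn when the machine looks busy. The sketch below uses Python's `os.getloadavg()`; the helper name `load_looks_high` and the threshold of one load unit per CPU are assumptions made for this example.

```python
import os


def load_looks_high(load_avg, num_cpus, threshold=1.0):
    """Return True if the 1-minute load per CPU exceeds `threshold`.

    `threshold` is a hypothetical heuristic, not a value taken from the library.
    """
    one_minute = load_avg[0]
    return one_minute / num_cpus > threshold


if __name__ == "__main__":
    try:
        load = os.getloadavg()  # (1 min, 5 min, 15 min); Unix only
    except (OSError, AttributeError):
        load = None  # load average is not available on this platform
    if load is not None:
        cpus = os.cpu_count() or 1
        print("Load Average: %.2f, %.2f, %.2f" % load)
        if load_looks_high(load, cpus):
            print("WARNING: high system load; results may be noisy")
```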

-

Federico Ficarelli authored

Adding myself to AUTHORS and CONTRIBUTORS according to guidelines.

-

Federico Ficarelli authored

* Set -Wno-deprecated-declarations for Intel

  Intel compiler silently ignores -Wno-deprecated-declarations so warning no. 1786 must be explicitly suppressed.

* Make std::int64_t → double casts explicit

  While std::int64_t → double is a perfectly conformant implicit conversion, Intel compiler warns about it. Make them explicit via static_cast<double>.

* Make std::int64_t → int casts explicit

  Intel compiler warns about emplacing an std::int64_t into an int container. Just make the conversion explicit via static_cast<int>.

* Cleanup Intel -Wno-deprecated-declarations workaround logic

-

- 03 Jul, 2018 1 commit

-

-

Federico Ficarelli authored

-

- 28 Jun, 2018 1 commit

-

-

Yoshinari Takaoka authored

-

- 27 Jun, 2018 2 commits

-

-

Roman Lebedev authored

Inspired by these [two](https://github.com/darktable-org/rawspeed/commit/a1ebe07bea5738f8607b48a7596c172be249590e) [bugs](https://github.com/darktable-org/rawspeed/commit/0891555be56b24f9f4af716604cedfa0da1efc6b) that I found and fixed in my code, both caused by the lack of these flags:

* `kIsIterationInvariant` - `* state.iterations()`

  The value is constant for every iteration, and needs to be **multiplied** by the iteration count.

* `kAvgIterations` - `/ state.iterations()`

  The value is global over all the iterations, and needs to be **divided** by the iteration count.

They play nice with `kIsRate`:

* `kIsIterationInvariantRate`
* `kAvgIterationsRate`

I'm not sure how meaningful they are when combined with `kAvgThreads`. I guess `kIsThreadInvariant` can be added, too, for symmetry with `kAvgThreads`.
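The arithmetic behind the two flags can be sketched in a few lines of Python. This is only a model of what the commit message describes, not the library's C++ code: the function `finish_counter`, its keyword arguments, and the assumption that a rate is obtained by dividing by elapsed seconds are all introduced here for illustration.

```python
def finish_counter(value, iterations, invariant=False, avg_iterations=False,
                   is_rate=False, seconds=1.0):
    """Model how a counter value is adjusted when a benchmark finishes.

    invariant       -- value was recorded per iteration: multiply by the count
    avg_iterations  -- value was accumulated globally: divide by the count
    is_rate         -- additionally divide by the elapsed time (assumed seconds)
    """
    result = float(value)
    if invariant:
        result *= iterations
    if avg_iterations:
        result /= iterations
    if is_rate:
        result /= seconds
    return result


# A counter set once to 8 bytes per iteration, over 1000 iterations:
total_bytes = finish_counter(8, 1000, invariant=True)
# A counter accumulated to 8000 over the whole run, reported per iteration:
per_iteration = finish_counter(8000, 1000, avg_iterations=True)
```

Combining `invariant=True` with `is_rate=True` models the `kIsIterationInvariantRate` case: the per-iteration value is scaled up to the whole run and then divided by the run time.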

-

Dominic Hamon authored

* Use EXPECT_DOUBLE_EQ when comparing doubles in tests. Fixes #623
* disable 'float-equal' warning

-

- 18 Jun, 2018 1 commit

-

-

Roman Lebedev authored

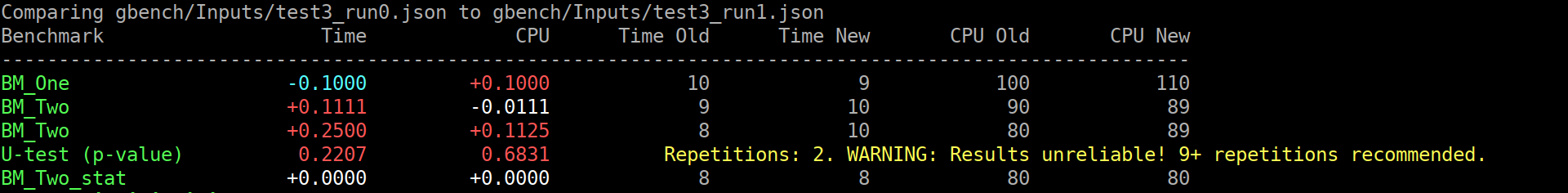

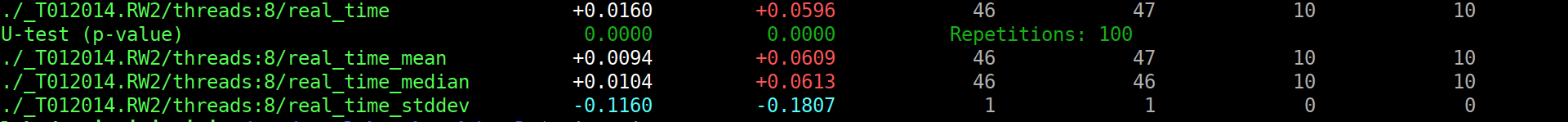

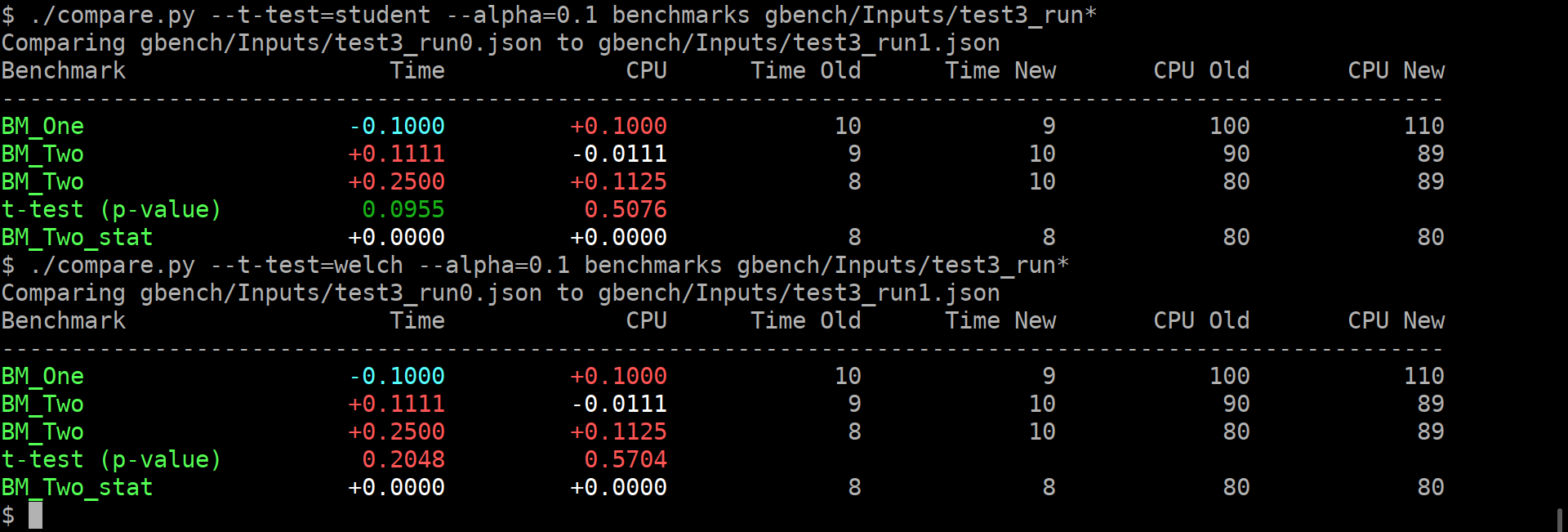

As previously discussed, let's flip the switch ^^. This exposes the problem that it will now be run for everyone, even if one did not read the help about the recommended repetition count. This is not good. So I think we can do the smart thing:

```
$ ./compare.py benchmarks gbench/Inputs/test3_run{0,1}.json
Comparing gbench/Inputs/test3_run0.json to gbench/Inputs/test3_run1.json
Benchmark                   Time             CPU      Time Old      Time New       CPU Old       CPU New
--------------------------------------------------------------------------------------------------------
BM_One                   -0.1000         +0.1000            10             9           100           110
BM_Two                   +0.1111         -0.0111             9            10            90            89
BM_Two                   +0.2500         +0.1125             8            10            80            89
BM_Two_pvalue             0.2207          0.6831      U Test, Repetitions: 2. WARNING: Results unreliable! 9+ repetitions recommended.
BM_Two_stat              +0.0000         +0.0000             8             8            80            80
```

(old screenshot)

Or, in the good case (noise omitted):

```
$ ./compare.py benchmarks /tmp/run{0,1}.json
Comparing /tmp/run0.json to /tmp/run1.json
Benchmark                                            Time             CPU      Time Old      Time New       CPU Old       CPU New
---------------------------------------------------------------------------------------------------------------------------------
<99 more rows like this>
./_T012014.RW2/threads:8/real_time                +0.0160         +0.0596            46            47            10            10
./_T012014.RW2/threads:8/real_time_pvalue          0.0000          0.0000      U Test, Repetitions: 100
./_T012014.RW2/threads:8/real_time_mean           +0.0094         +0.0609            46            47            10            10
./_T012014.RW2/threads:8/real_time_median         +0.0104         +0.0613            46            46            10            10
./_T012014.RW2/threads:8/real_time_stddev         -0.1160         -0.1807             1             1             0             0
```

(old screenshot)

-

- 07 Jun, 2018 1 commit

-

-

Dominic Hamon authored

Fixes #608

-

- 06 Jun, 2018 1 commit

-

-

Marat Dukhan authored

list(FILTER ...) is a CMake 3.6 feature, but benchmark targets CMake 2.8.12

-

- 05 Jun, 2018 2 commits

-

-

Sergiu Deitsch authored

-

Marat Dukhan authored

* Fix compilation on Android with GNU STL

  GNU STL in Android NDK lacks string conversion functions from C++11, including std::stoul, std::stoi, and std::stod. This patch reimplements these functions in the benchmark:: namespace using C-style equivalents from C++03.

* Avoid use of log2 which doesn't exist in Android GNU STL

  GNU STL in Android NDK lacks the log2 function from C99/C++11. This patch replaces its use in the code with the double log(double) function.

-

- 01 Jun, 2018 1 commit

-

-

BaaMeow authored

* format all documents according to contributor guidelines and specifications

  use clang-format on/off to stop formatting when it makes excessively poor decisions

* format all tests as well, and mark blocks which change too much

-

- 30 May, 2018 1 commit

-

-

Dominic Hamon authored

Specifically some iOS targets.

-

- 29 May, 2018 6 commits

-

-

Dominic Hamon authored

-

Eric authored

As @dominichamon and I have discussed, the current reporter interface is poor at best. And something should be done to fix it. I strongly suspect such a fix will require an entire reimagining of the API, and therefore breaking backwards compatibility fully. For that reason we should start deprecating and removing parts that we don't intend to replace. One of these parts, I argue, is the CSVReporter. I propose that the new reporter interface should choose a single output format (JSON) and traffic entirely in that. If somebody really wanted to replace the functionality of the CSVReporter they would do so as an external tool which transforms the JSON. For these reasons I propose deprecating the CSVReporter.
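The kind of external tool the proposal has in mind could look roughly like the sketch below, which converts a JSON report into CSV using only the Python standard library. It is an illustration, not an existing tool: the function name `benchmark_json_to_csv` is hypothetical, and the field names (`benchmarks`, `name`, `iterations`, `real_time`, `cpu_time`, `time_unit`) are assumed from the library's JSON output and should be checked against a real report.

```python
import csv
import io
import json

# Columns to carry over into the CSV; assumed field names, see the note above.
FIELDS = ["name", "iterations", "real_time", "cpu_time", "time_unit"]


def benchmark_json_to_csv(json_text):
    """Convert a JSON benchmark report (as text) into CSV text."""
    report = json.loads(json_text)
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=FIELDS, extrasaction="ignore",
                            lineterminator="\n")
    writer.writeheader()
    for row in report.get("benchmarks", []):
        # Keys missing from a row become empty cells; extra keys are dropped.
        writer.writerow(row)
    return out.getvalue()
```

A command-line wrapper would read the report from a file or standard input and print the returned string.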

-

Dominic Hamon authored

-

Dominic Hamon authored

-

Roman Lebedev authored

The first problem you have to solve yourself. The second one can be aided.

The benchmark library can compute some statistics over the repetitions, which helps with grasping the results somewhat. But that is only for the one set of results. It does not really help to compare the two benchmark results, which is the interesting bit.

Thankfully, there are these bundled `tools/compare.py` and `tools/compare_bench.py` scripts. They can provide a diff between two benchmarking results. Yay! Except not really: it's just a diff. While it is very informative and better than nothing, it does not really help answer The Question - am I just looking at the noise? It's like not having these per-benchmark statistics...

Roughly, we can formulate the question as:

> Are these two benchmarks the same?
> Did my change actually change anything, or is the difference below the noise level?

Well, this really sounds like a [null hypothesis](https://en.wikipedia.org/wiki/Null_hypothesis), does it not? So maybe we can use statistics here, and solve all our problems? lol, no, it won't solve all the problems. But maybe it will act as a tool, to better understand the output, just like the usual statistics on the repetitions...

I'm making an assumption here that most people care about the change of the average value, not the standard deviation. Thus I believe we can use a T-Test, be it either [Student's t-test](https://en.wikipedia.org/wiki/Student%27s_t-test), or [Welch's t-test](https://en.wikipedia.org/wiki/Welch%27s_t-test).

**EDIT**: however, after @dominichamon's review, it was decided that it is better to use the more robust [Mann–Whitney U test](https://en.wikipedia.org/wiki/Mann–Whitney_U_test). I'm using [scipy.stats.mannwhitneyu](https://docs.scipy.org/doc/scipy/reference/generated/scipy.stats.mannwhitneyu.html#scipy.stats.mannwhitneyu).

There are two new user-facing knobs:

```
$ ./compare.py --help
usage: compare.py [-h] [-u] [--alpha UTEST_ALPHA]
                  {benchmarks,filters,benchmarksfiltered} ...

versatile benchmark output compare tool
<...>
optional arguments:
  -h, --help            show this help message and exit
  -u, --utest           Do a two-tailed Mann-Whitney U test with the null
                        hypothesis that it is equally likely that a randomly
                        selected value from one sample will be less than or
                        greater than a randomly selected value from a second
                        sample. WARNING: requires **LARGE** (9 or more)
                        number of repetitions to be meaningful!
  --alpha UTEST_ALPHA   significance level alpha. if the calculated p-value is
                        below this value, then the result is said to be
                        statistically significant and the null hypothesis is
                        rejected. (default: 0.0500)
```

Example output:

As you can guess, the alpha does not affect anything but the coloring of the computed p-values. If it is green, then the change in the average values is statistically-significant.

I'm detecting the repetitions by matching name. This way, no changes to the json are _needed_.

Caveats:

* This won't work if the json is not in the same order as outputted by the benchmark, or if the parsing does not retain the ordering.
* This won't work if after the grouped repetitions there isn't at least one row with a different name (e.g. statistic). Since there isn't a knob to disable printing of statistics (only the other way around), I'm not too worried about this.
* **The results will be wrong if the repetition count is different between the two benchmarks being compared.**
* Even though I have added (hopefully full) test coverage, the code of these python tools is starting to look a bit jumbled.
* So far I have added this only to `tools/compare.py`. Should I add it to `tools/compare_bench.py` too? Or should we deduplicate them (by removing the latter one)?
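Detecting repetitions by matching names, and the ordering caveat that comes with it, can be sketched as follows. This is not the code in `tools/compare.py`; the helper names `group_repetitions` and `is_significant` and the row layout (dicts with `name` and `real_time`) are assumptions for illustration. The p-value itself would come from `scipy.stats.mannwhitneyu`, which is left out here so the sketch needs only the standard library.

```python
from itertools import groupby


def group_repetitions(rows):
    """Group *consecutive* rows that share a name.

    Returns a list of (name, [real_time, ...]) pairs. Rows with the same
    name that are not adjacent end up in separate groups, which is why the
    JSON must keep the order in which the benchmark emitted it.
    """
    return [(name, [row["real_time"] for row in group])
            for name, group in groupby(rows, key=lambda row: row["name"])]


def is_significant(p_value, alpha=0.05):
    """Reject the null hypothesis when the p-value is below alpha."""
    return p_value < alpha


rows = [
    {"name": "BM_One", "real_time": 10},
    {"name": "BM_Two", "real_time": 9},
    {"name": "BM_Two", "real_time": 8},
    {"name": "BM_Two_stat", "real_time": 8},
]
groups = group_repetitions(rows)  # BM_Two is seen as one group of two repetitions
```

Each group of timings from the old run would be paired with the same-named group from the new run and passed to the U test; a result with `is_significant(p)` true is the one shown in green.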

-

Dominic Hamon authored

-

- 25 May, 2018 1 commit

-

-

Alex Strelnikov authored

* Add benchmark_main library with support for Bazel.
* fix newline at end of file
* Add CMake support for benchmark_main.
* Mention optionally using benchmark_main in README.

-

- 24 May, 2018 2 commits

-

-

mattreecebentley authored

* Correct/clarify build/install instructions

  GTest is google test; don't obfuscate needlessly for newcomers. Adding google test into the installation guide helps newcomers. The third option under this line: "Note that Google Benchmark requires Google Test to build and run the tests. This dependency can be provided three ways:" was not true (it did not occur). If there is a further option that needs to be specified in order for that functionality to work, it needs to be specified.

* Add prerequisite knowledge section

  A lot of assumptions are made about the reader in the documentation. This is unfortunate.

* Removal of abbreviations for google test

-

Samuel Panzer authored

* Return a reasonable value from State::iterations() even before starting a benchmark
* Optimize State::iterations() for started case.

-

- 14 May, 2018 1 commit

-

-

Deniz Evrenci authored

* Update AUTHORS and CONTRIBUTORS
* split_list is not defined for assembly tests

-

- 09 May, 2018 1 commit

-

-

Nan Xiao authored

-

- 08 May, 2018 3 commits

-

-

Roman Lebedev authored

-

Roman Lebedev authored

-

php1ic authored

Git was being executed in the current directory, so it could not get the latest tag if cmake was run from a build directory. Force git to be run from within the source directory.

-

- 03 May, 2018 1 commit

-

-

Sam Clegg authored

The old EMSCRIPTEN macro is deprecated and not enabled when EMCC_STRICT is set. Also fix a typo in EMSCRIPTN (not sure how this ever worked).

-

- 02 May, 2018 2 commits

-

-

Nan Xiao authored

-

Alex Strelnikov authored

Note, bazel only supports MSVC on Windows, and not MinGW, so linking against shlwapi.lib only needs to follow MSVC conventions. git_repository() did not work in local testing, so is swapped for http_archive(). The latter is also documented as the preferred way to depend on an external library in bazel.

-

- 01 May, 2018 1 commit

-

-

Alex Strelnikov authored

The benchmarks in the test/ currently build because they all include a dep on gtest, which brings in pthread when needed.

-

- 26 Apr, 2018 1 commit

-

-

Tim Bradgate authored

* Allow support for negative regex filtering

  This patch allows one to apply a negation to the entire regex filter by prefixing it with a '-' character, much in the same style as GoogleTest uses.

* Address issues in PR
* Add unit tests for negative filtering
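The behaviour can be modelled in a few lines of Python. This is a sketch under assumptions, not the library's C++ implementation: it assumes the '-' is the first character of the filter (as in GoogleTest), that the remaining pattern is applied as a search rather than a full match, and the function name `select_benchmarks` is hypothetical.

```python
import re


def select_benchmarks(names, spec):
    """Return the names selected by `spec`.

    A leading '-' negates the whole filter: names that match the rest of
    the pattern are excluded instead of included.
    """
    negative = spec.startswith("-")
    pattern = re.compile(spec[1:] if negative else spec)
    return [name for name in names
            if bool(pattern.search(name)) != negative]


names = ["BM_Foo", "BM_Bar", "BM_FooBar"]
kept = select_benchmarks(names, "Foo")      # benchmarks matching "Foo"
dropped = select_benchmarks(names, "-Foo")  # everything except those
```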

-

- 23 Apr, 2018 2 commits

-

-

Yangqing Jia authored

-

Victor Costan authored

-

- 19 Apr, 2018 1 commit

-

-

Dominic Hamon authored

Before this change, we would report the number of requested iterations passed to the state. After, we will report the actual number run. As a side-effect, instead of multiplying the expected iterations by the number of threads to get the total number, we can report the actual number of iterations across all threads, which takes into account the situation where some threads might run more iterations than others.
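The difference between the two ways of counting can be shown with a small Python sketch. The function names and the per-thread numbers are made up for illustration and do not come from the library.

```python
def requested_total(requested_per_thread, num_threads):
    """Old reporting: assume every thread ran exactly the requested count."""
    return requested_per_thread * num_threads


def actual_total(iterations_run_per_thread):
    """New reporting: add up what each thread really ran."""
    return sum(iterations_run_per_thread)


# Hypothetical run: 100 iterations requested on 4 threads, but the threads
# did not all run the same number of iterations.
old_report = requested_total(100, 4)
new_report = actual_total([100, 100, 103, 98])
```

When every thread runs exactly the requested count the two numbers agree; they diverge only when some threads run more or fewer iterations than others.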

-

- 12 Apr, 2018 1 commit

-

-

Dominic Hamon authored

* Ensure 64-bit truncation doesn't happen for complexity results
* One more complexity_n 64-bit fix
* Missed another vector of int
* Piping through the int64_t

-

- 09 Apr, 2018 1 commit

-

-

Fred Tingaud authored

-

- 06 Apr, 2018 1 commit

-

-

Eric Fiselier authored

-

- 03 Apr, 2018 1 commit

-

-

Dominic Hamon authored

* Allow AddRange to work with int64_t.

  Fixes #516

  Also, tweak how we manage per-test build needs, and create a standard _gtest suffix for googletest to differentiate from non-googletest tests. I also ran clang-format on the files that I changed (but not the benchmark include or main src as they have too many clang-format issues).

* Add benchmark_gtest to cmake
* Set(Items|Bytes)Processed now take int64_t

-